A helper library to interact with Arize AI APIs.

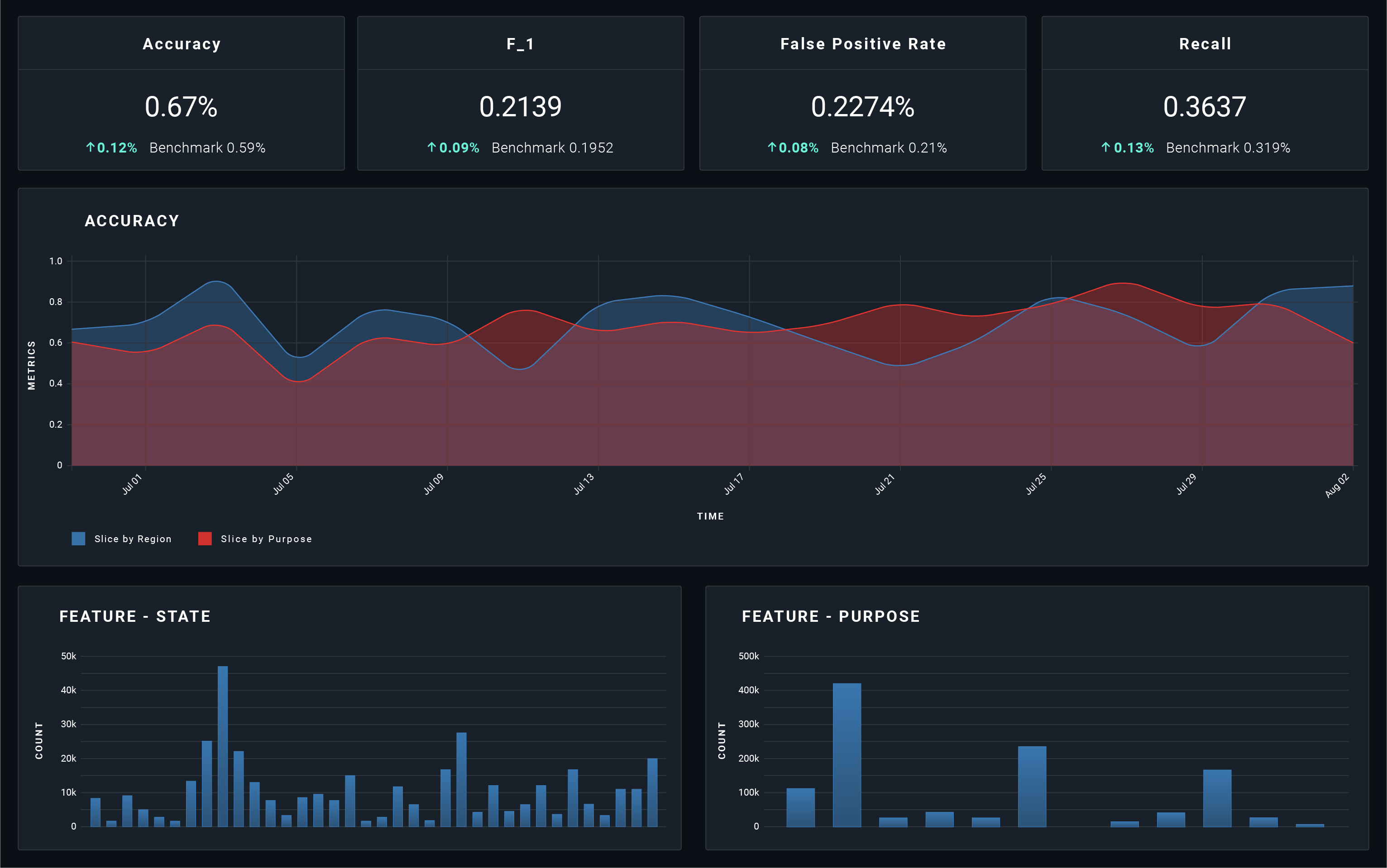

Arize is an end-to-end ML observability and model monitoring platform. The platform is designed to help ML engineers and data science practitioners surface and fix issues with ML models in production faster with:

- Automated ML monitoring and model monitoring

- Workflows to troubleshoot model performance

- Real-time visualizations for model performance monitoring, data quality monitoring, and drift monitoring

- Model prediction cohort analysis

- Pre-deployment model validation

- Integrated model explainability

This guide will help you instrument your code to log observability data for model monitoring and ML observability. The types of data supported include prediction labels, human readable/debuggable model features and tags, actual labels (once the ground truth is learned), and other model-related data. Logging model data allows you to generate powerful visualizations in the Arize platform to better monitor model performance, understand issues that arise, and debug your model's behavior. Additionally, Arize provides data quality monitoring, data drift detection, and performance management of your production models.

Start logging your model data with the following steps:

Sign up for a free account by filling out the web form.

When you create an account, we generate a service API key. You will need this API Key and your Space Key for logging authentication.

If you are using the Arize python client, add a few lines to your code to log predictions and actuals. Logs are sent to Arize asynchronously.

Install the Arize library in an environment using Python >= 3.6.

$ pip3 install arizeOr clone the repo:

$ git clone https://github.com/Arize-ai/client_python.git

$ python3 -m pip install client_python/Initialize the arize client at the start of your service using your previously created API and Space Keys.

NOTE: We strongly suggest storing the API key as a secret or an environment variable.

from arize.api import Client

from arize.utils.types import ModelTypes, Environments

API_KEY = os.environ.get('ARIZE_API_KEY') #If passing api_key via env vars

arize_client = Client(space_key='ARIZE_SPACE_KEY', api_key=API_KEY)For a single real-time prediction, you can track all input features used at prediction time by logging them via a key:value dictionary.

features = {

'state': 'ca',

'city': 'berkeley',

'merchant_name': 'Peets Coffee',

'pos_approved': True,

'item_count': 10,

'merchant_type': 'coffee shop',

'charge_amount': 20.11,

}When dealing with bulk predictions, you can pass in input features, prediction/actual labels, and prediction_ids for more than one prediction via a Pandas Dataframe where df.columns contain feature names.

## e.g. labels from a CSV. Labels must be 2-D data frames where df.columns correspond to the label name

features_df = pd.read_csv('path/to/file.csv')

prediction_labels_df = pd.DataFrame(np.random.randint(1, 100, size=(features.shape[0], 1)))

ids_df = pd.DataFrame([str(uuid.uuid4()) for _ in range(len(prediction_labels.index))])## Returns an array of concurrent.futures.Future

pred = arize.log(

model_id='sample-model-1',

model_version='v1.23.64',

model_type=ModelTypes.NUMERIC,

prediction_id='plED4eERDCasd9797ca34',

prediction_label=True,

features=features,

)

#### To confirm that the log request completed successfully, await for it to resolve:

## NB: This is a blocking call

response = pred.get()

res = response.result()

if res.status_code != 200:

print(f'future failed with response code {res.status_code}, {res.text}')The client's log_prediction/actual function returns a single concurrent future. To capture the logging response, you can await the resolved futures. If you desire a fire-and-forget pattern, you can disregard the responses altogether.

We automatically discover new models logged over time based on the model ID sent on each prediction.

NOTE: Notice the prediction_id passed in matches the original prediction sent on the previous example above.

response = arize.log(

model_id='sample-model-1',

model_type=ModelTypes.NUMERIC,

prediction_id='plED4eERDCasd9797ca34',

actual_label=False

)Once the actual labels (ground truth) for your predictions have been determined, you can send them to Arize and evaluate your metrics over time. The prediction id for one prediction links to its corresponding actual label so it's important to note those must be the same when matching events.

Use arize.pandas.logger to publish a dataframe with the features, predicted label, actual, and/or SHAP to Arize for monitoring, analysis, and explainability.

from arize.pandas.logger import Client, Schema

from arize.utils.types import ModelTypes, Environments

API_KEY = os.environ.get('ARIZE_API_KEY') #If passing api_key via env vars

arize_client = Client(space_key='ARIZE_SPACE_KEY', api_key=API_KEY)response = arize_client.log(

dataframe=your_sample_df,

model_id="fraud-model",

model_version="1.0",

model_type=ModelTypes.SCORE_CATEGORICAL,

environment=Environments.PRODUCTION,

schema = Schema(

prediction_id_column_name="prediction_id",

timestamp_column_name="prediction_ts",

prediction_label_column_name="prediction_label",

prediction_score_column_name="prediction_score",

feature_column_names=feature_cols,

)

)

response = arize_client.log(

dataframe=your_sample_df,

model_id=model_id,

model_type=ModelTypes.SCORE_CATEGORICAL,

environment=Environments.PRODUCTION,

schema = Schema(

prediction_id_column_name="prediction_id",

actual_label_column_name="actual_label",

)

)response = arize_client.log(

dataframe=your_sample_df,

model_id="fraud-model",

model_version="1.0",

model_type=ModelTypes.NUMERIC,

environment=Environments.PRODUCTION,

schema = Schema(

prediction_id_column_name="prediction_id",

timestamp_column_name="prediction_ts",

prediction_label_column_name="prediction_label",

actual_label_column_name="actual_label",

feature_column_names=feature_col_name,

shap_values_column_names=dict(zip(feature_col_name, shap_col_name))

)

)That's it! Once your service is deployed and predictions are logged you'll be able to log into your Arize account and dive into your data, slicing it by features, tags, models, time, etc.

Log feature importance in SHAP values to the Arize platform to explain your model's predictions. By logging SHAP values you gain the ability to view the global feature importances of your predictions as well as the ability to perform cohort and prediction based analysis to compare feature importance values under varying conditions. For more information on SHAP and how to use SHAP with Arize, check out our SHAP documentation.

If you are using a different language, you'll be able to post an HTTP request to our Arize edge-servers to log your events.

curl -X POST -H "Authorization: YOU_API_KEY" "https://log.arize.com/v1/log" -d'{"space_key": "YOUR_SPACE_KEY", "model_id": "test_model_1", "prediction_id":"test100", "prediction":{"model_version": "v1.23.64", "features":{"state":{"string": "CO"}, "item_count":{"int": 10}, "charge_amt":{"float": 12.34}, "physical_card":{"string": true}}, "prediction_label": {"binary": false}}}'Visit Us At: https://arize.com/model-monitoring/

Official Documentations: https://docs.arize.com/arize/

- What is ML observability?

- Playbook to model monitoring in production

- Using statistical distance metrics for ML monitoring and observability

- ML infrastructure tools for data preparation

- ML infrastructure tools for model building

- ML infrastructure tools for production

- ML infrastructure tools for model deployment and model serving

- ML infrastructure tools for ML monitoring and observability

Visit the Arize Blog and Resource Center for more resources on ML observability and model monitoring.